UPDATE

A fuller development of the Arecibo Ascii code is now available at http://uscii.hostilefork.com/

Every programmer who knows English is aware of the ASCII code, which declares that 65 means "A" and 66 means "B", etc. Yet there is nothing intrinsically "A-like" about the number 65 (binary: 1000001), nor anything B-like about the number 66 (binary: 1000010). To see that, just imagine living in the 1800s and this fell from the sky on a piece of paper:

1000001100001010000101000001

Even if you knew it was supposed to represent text, I think it would be impossible to read that as "ABBA" with any degree of confidence. You might be able to get a clue that 7-bit sections were significant if you had a large body of data and realized they were always multiples of seven in length, but any single signal like this would not be enough. You'd be in an especially bad position if you didn't know anything about alphabetical order (which isn't a strict prerequisite of being able to read or write English successfully)!

To address this, I created something called "Arecibo ASCII". It's named after the infamous Arecibo message--a binary sequence transmitted into space that tried to explain some things about humanity. The goal was to make as few assumptions about the receiving aliens as possible...only that they had an understanding of physics and math (and obviously, the ability to detect electromagnetic waves).

When Carl Sagan and Frank Drake composed the message, they took it to Richard Feynman without explaining to him what it was. They figured if Feynman couldn't decode it--given his upper hand of already knowing Earth science--then the aliens wouldn't have a chance! Luckily, Feynman got pretty much all of it.

In the spirit of that test, I'll send you an "Arecibo ASCII" message before I tell you how it works! :)

111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110100011000110001111111000110001100010000000000001110100011000110001011100000000000001111100000111000001111100001000010011111001000010000101000100000000010000000001000010000100001000011000010000100001000010000100011100000000000001110100011111110000011110000000000000000000000000000000000000111111000010000111101000010000100000000000000001110100011000110001011100000000000010110110011000010000100000010000100001001010100110001010010010111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110111111111111111111111111111111111110

Want to test your alien-codebreaking-savvy? See if you can figure out what that says before you read the rest of the article! Otherwise, just read on as I spill the beans...

Symbols Of Prime Importance

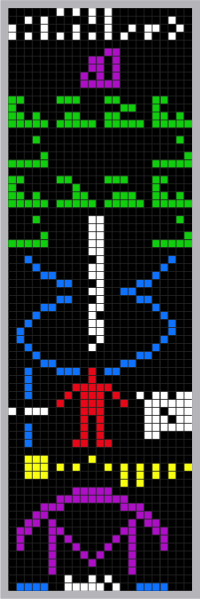

The Arecibo message was transmitted as a straight sequence of binary digits. When you laid it out in a two-dimensional grid, you got a picture (the color is for instructional purposes only, it was effectively a 2-color bitmap):

The signal was chosen to be 1679 bits long, and this number was chosen for a reason. It is a semiprime...meaning it is a product of two primes (23 and 73). Thus, if you got the idea in your head to decode the bits as an image, there are only two natural ways of forming a rectangle. One as a series of 73 rows with 23 bits per row, or as a series of 23 rows with 73 bits per row.

I decided that Arecibo ASCII would use semiprimes as well. There's a magic number "35" hinted at in the bit pattern of my sample message, because every 36th bit is a zero. Also, it is prefixed and postfixed by unary expressions of the number 35. (There's no upper limit on how many you can tack on--arguably the more certain you want to hint at the "magic" of 35 in the message, the more you would want to add. But to meet the specification, a string merely needs one at the head and one at the end.)

So we have the mysterious appearance of 35, and the fact that the message length is always a multiple of 36. I'd say it's fairly natural to want to divide the message into segments of 36 bits. Then the zero that appears periodically every 36th bit can be assumed as some kind of delimiter...and probably not relevant to the message. So doing that to my transmission, we get:

11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 10001100011000111111100011000110001 0 00000000000111010001100011000101110 0 00000000000111110000011100000111110 0 00100001001111100100001000010100010 0 00000001000000000100001000010000100 0 01100001000010000100001000010001110 0 00000000000111010001111111000001111 0 00000000000000000000000000000000000 0 11111100001000011110100001000010000 0 00000000000111010001100011000101110 0 00000000001011011001100001000010000 0 01000010000100101010011000101001001 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0 11111111111111111111111111111111111 0

Now we have 35 bits in each "unit". If we're anything like the alien scientists they hoped would receive the Arecibo message, then the semiprime number might lead us to make the leap toward decoding it as a rectangular grid. Further, we are given a bit of a hint by the blocks of all-1s...five at the beginning and seven at the end. :) Let's try laying it out in 7x5...

1000110 0011000 1111111 0001100 0110001

That's not anything too recognizable. How about the other way?

10001 10001 10001 11111 10001 10001 10001

That looks a lot like a letter "H" in a 5x7 bitmap font. Taking the next 35-bit series in the same way:

00000 00000 01110 10001 10001 10001 01110

You might say that's a lowercase "o", right? That's because in true blog vanity I made the first Arecibo ASCII message say "Hostile Fork". If you figured it out without reading the explanation first, then congratulations...and let me know about your experience!

The Arecibo ASCII Table Version 0.1 Alpha

Here's a first crack at an Arecibo ASCII table:

| ASCII | Character | Arecibo ASCII (35-bit binary) |

| 10 | (return) | 00001000010010101101111110110000100 |

| 32 | (space) | 00000000000000000000000000000000000 |

| 33 | ! | 00100001000010000100000000000000100 |

| 34 | " | 01010010100101000000000000000000000 |

| 35 | # | 01010010101111101010111110101001010 |

| 36 | $ | 00100011111010001110001011111000100 |

| 37 | % | 11000110010001000100010001001100011 |

| 38 | & | 01100100101010001000101011001001101 |

| 39 | ' | 01100001000100000000000000000000000 |

| 40 | ( | 00010001000100001000010000010000010 |

| 41 | ) | 01000001000001000010000100010001000 |

| 42 | * | 00000001001010101110101010010000000 |

| 43 | + | 00000001000010011111001000010000000 |

| 44 | , | 00000000000000000000011000010001000 |

| 45 | - | 00000000000000011111000000000000000 |

| 46 | . | 00000000000000000000000000110001100 |

| 47 | / | 00000000010001000100010001000000000 |

| 48 | 0 | 01110100011001110101110011000101110 |

| 49 | 1 | 00100011000010000100001000010001110 |

| 50 | 2 | 01110100010000100010001000100011111 |

| 51 | 3 | 11111000100010000010000011000101110 |

| 52 | 4 | 00010001100101010010111110001000010 |

| 53 | 5 | 11111100001111000001000011000101110 |

| 54 | 6 | 00110010001000011110100011000101110 |

| 55 | 7 | 11111000010001000100010000100001000 |

| 56 | 8 | 01110100011000101110100011000101110 |

| 57 | 9 | 01110100011000101111000010001001100 |

| 58 | : | 00000011000110000000011000110000000 |

| 59 | ; | 00000011000110000000011000010001000 |

| 60 | < | 00010001000100010000010000010000010 |

| 61 | = | 00000000001111100000111110000000000 |

| 62 | > | 01000001000001000001000100010001000 |

| 63 | ? | 01110100010000100010001000000000100 |

| 64 | @ | 01110100010000101101101011010101110 |

| 65 | A | 01110100011000110001111111000110001 |

| 66 | B | 11110100011000111110100011000111110 |

| 67 | C | 01110100011000010000100001000101110 |

| 68 | D | 11100100101000110001100011001011100 |

| 69 | E | 11111100001000011110100001000011111 |

| 70 | F | 11111100001000011110100001000010000 |

| 71 | G | 01110100011000010111100011000101111 |

| 72 | H | 10001100011000111111100011000110001 |

| 73 | I | 01110001000010000100001000010001110 |

| 74 | J | 00111000100001000010000101001001100 |

| 75 | K | 10001100101010011000101001001010001 |

| 76 | L | 10000100001000010000100001000011111 |

| 77 | M | 10001110111010110101100011000110001 |

| 78 | N | 10001100011100110101100111000110001 |

| 79 | O | 01110100011000110001100011000101110 |

| 80 | P | 11110100011000111110100001000010000 |

| 81 | Q | 01110100011000110001101011001001101 |

| 82 | R | 11110100011000111110101001001010001 |

| 83 | S | 01111100001000001110000010000111110 |

| 84 | T | 11111001000010000100001000010000100 |

| 85 | U | 10001100011000110001100011000101110 |

| 86 | V | 10001100011000110001100010101000100 |

| 87 | W | 10001100011000110001101011010101010 |

| 88 | X | 10001100010101000100010101000110001 |

| 89 | Y | 10001100011000101010001000010000100 |

| 90 | Z | 11111000010001000100010001000011111 |

| 91 | [ | 01110010000100001000010000100001110 |

| 92 | \ | 00000100000100000100000100000100000 |

| 93 | ] | 01110000100001000010000100001001110 |

| 94 | ^ | 00100010101000100000000000000000000 |

| 95 | _ | 00000000000000000000000000000011111 |

| 96 | ` | 01000001000001000000000000000000000 |

| 97 | a | 00000000000111000001011111000101111 |

| 98 | b | 10000100001000011110100011000111110 |

| 99 | c | 00000000000111110000100001000001111 |

| 100 | d | 00001000010000101111100011000101111 |

| 101 | e | 00000000000111010001111111000001111 |

| 102 | f | 00010001010010001110001000010000100 |

| 103 | g | 00000000000111110001011110000111110 |

| 104 | h | 10000100001000011110100011000110001 |

| 105 | i | 00000001000000000100001000010000100 |

| 106 | j | 00010000000001000010000101001001100 |

| 107 | k | 01000010000100101010011000101001001 |

| 108 | l | 01100001000010000100001000010001110 |

| 109 | m | 00000000001101110101101011010110001 |

| 110 | n | 00000000001011011001100011000110001 |

| 111 | o | 00000000000111010001100011000101110 |

| 112 | p | 00000000001111010001111101000010000 |

| 113 | q | 00000000000111110001011110000100001 |

| 114 | r | 00000000001011011001100001000010000 |

| 115 | s | 00000000000111110000011100000111110 |

| 116 | t | 00100001001111100100001000010100010 |

| 117 | u | 00000000001000110001100011000101110 |

| 118 | v | 00000000001000110001100010101000100 |

| 119 | w | 00000000001000110001101011010101010 |

| 120 | x | 00000000001000101010001000101010001 |

| 121 | y | 00000000001000101010001000010001000 |

| 122 | z | 00000000001111100010001000100011111 |

| 123 | { | 00011001000010001000001000010000011 |

| 124 | | | 00100001000010000000001000010000100 |

| 125 | } | 11000001000010000010001000010011000 |

| 126 | ~ | 00001011101000000000000000000000000 |

Bear in mind though it may seem like "just a 5x7 font", the important bit is that everyone writing documents use the same values. The Arecibo ASCII "A" equates to the decimal value 15,621,226,033 and looks like this:

01110

10001

10001

10001

11111

10001

10001

I've argued that this number is much more "A-like" than 65. Yet I'll point out that it isn't more A-like than similar patterns, like the one produced by 15,621,670,449:

01110

10001

10001

11111

10001

10001

10001

It's barely significant whether the cross bar of the A is on the 4th row or on the 5th row. Yet what makes this not a font is the agreement on a standard--just as with ordinary ASCII everyone needs to agree to use the same numbers! Then you can use those numbers to index into a properly stroked font. These bitmaps are a hidden cache of meaning and not meant to be drawn in ordinary circumstances!

Note

I've also got a plan for "Arecibo UNICODE", that would use a larger pair of primes to represent more detail in the glyphs, such as adequately capturing Chinese characters. Once again the intent would not be to draw these bitmaps to the user--they'd be too pixelated. Yet it's interesting to note that you could fall back upon the bitmap on a machine that was missing that font. That way, you'd get something better than the notorious "<?>" that pops up when your system doesn't understand a character today...

What's it good for?

Whether you imagine such a thing could ever be useful...hopefully you at least think it's interesting. Especially if mathematicians who know English letters can consistently figure out short messages from a bare bitstream. A better test would be to avoid using the word "Arecibo" (so as to not give any hint that semiprimes and bitmaps play a part).

What first got me thinking about USCII was the rise of "literal URLs". Search engines prefer the URL to contain a description of what the link is about, such as how this article is called

http://blog.hostilefork.com/can-you-crack-arecibo-ascii. Yet my time as a database programmer had led me to think it was almost always better to use abstract numbers in URLs. So I was confused on whether to embrace this new trend or not.

I started to see how using a purely abstract number is a double-edged sword. On the one hand, you've lost the fluidity to make changes--if the URL for this article had been

http://blog.hostilefork.com/2008/10/20/96/ then I can fix typos without breaking links (what if I'd spelled "arecibo" wrong and wanted to fix it?) On the other hand, imagine that someone hacks my server and puts up some kind of spam that has nothing to do with ASCII at all. In a system which isn't under centralized control, making linkages more "literal" helps protect us from from thinking the person who made the inbound link was purposefully trying to spam us.

This led me to ponder how "pure" the character codes themselves are. Right now we do rely heavily on tables to interpret numbers. If you get [89 69 83] then what do you do if one table says:

89→"Y", 69→"E", and 83→"S"

...while the other table says:

89→"N", 69→"O", and 83→"!"?

Fortunately ASCII is small, but UNICODE has over 100,000 symbols. If the numbers had some truth to them--or in them--your belief would be bolstered that you were at least decoding the message as it was intended.

Acknowledgements

I took the 5x7 font data from the Font Code for PIC CPU Assemblers. (Still, I'll reiterate that it's best to not think of Arecibo ASCII as a "font". Rather, it is a canonization of a font such that its numeric representation becomes a standard for information interchange.)